
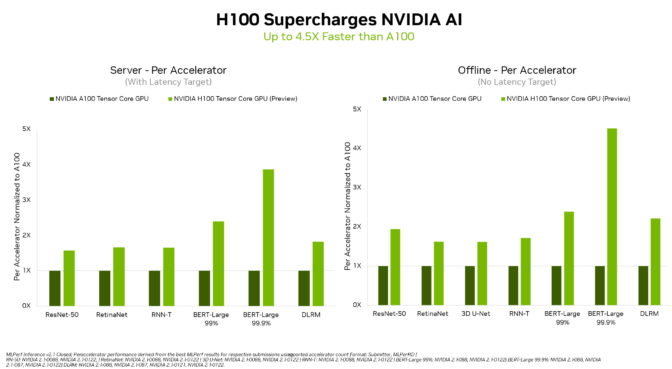

In their debut on the MLPerf industry-standard AI benchmarks, NVIDIA H100 Tensor Core GPUs set world records in inference on all workloads, delivering up to 4.5x more performance than previous-generation GPUs.

The results demonstrate that Hopper is the premium choice for users who demand the utmost performance on advanced AI models.

Additionally, NVIDIA A100 Tensor Core GPUs and the NVIDIA Jetson AGX Orin module for AI-powered robotics continued to deliver overall leadership in inference performance across all MLPerf tests: image and speech recognition, natural language processing and recommender systems.

The H100, aka Hopper, raised the bar in per-accelerator performance across all six neural networks in the round. It demonstrated leadership in both throughput and speed in separate server and offline scenarios.
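To make the distinction between the two scenarios concrete, here is a minimal sketch, assuming the usual framing in which the offline scenario is scored on throughput (samples per second with all queries available up front) and the server scenario is scored on whether a tail-latency bound is met. The function names and numbers are hypothetical illustrations; this is not the MLPerf LoadGen API and does not reproduce any submission's measurements.

```python
import math


def offline_throughput(num_samples, total_seconds):
    """Offline-style metric: samples processed per second."""
    return num_samples / total_seconds


def percentile_latency(latencies, pct):
    """Nearest-rank percentile of a list of per-query latencies (seconds)."""
    ordered = sorted(latencies)
    # Nearest-rank method: smallest value with at least pct% of data at or below it.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_server_target(latencies, pct, bound_seconds):
    """Server-style check: is the pct-th percentile latency within the bound?"""
    return percentile_latency(latencies, pct) <= bound_seconds


# Hypothetical numbers, for illustration only.
sample_latencies = [0.01, 0.02, 0.03, 0.04, 0.05, 0.06, 0.07, 0.08, 0.09, 0.10]
batch_rate = offline_throughput(1000, 4.0)
within_bound = meets_server_target(sample_latencies, 90, 0.1)
```

A system can score well on one metric and poorly on the other, which is why the two scenarios are reported separately.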

The NVIDIA Hopper architecture delivered up to 4.5x more performance than NVIDIA Ampere architecture GPUs, which continue to provide overall leadership in MLPerf results.

Thanks in part to its Transformer Engine, Hopper excelled on the popular BERT model for natural language processing. It’s among the largest and most performance-hungry of the MLPerf AI models.

These inference benchmarks mark the first public demonstration of H100 GPUs, which will be available later this year. The H100 GPUs will participate in future MLPerf rounds for training.

A100 GPUs Show Leadership

NVIDIA A100 GPUs, available today from major cloud service providers and systems manufacturers, continued to show overall leadership in mainstream performance on AI inference in the latest tests.

A100 GPUs won more tests than any other submission in data center and edge computing categories and scenarios. In June, the A100 also delivered overall leadership in MLPerf training benchmarks, demonstrating its abilities across the AI workflow.

Since their July 2020 debut on MLPerf, A100 GPUs have advanced their performance by 6x, thanks to continuous improvements in NVIDIA AI software.

NVIDIA AI is the only platform to run all MLPerf inference workloads and scenarios in data center and edge computing.

Users Need Versatile Performance

The ability of NVIDIA GPUs to deliver leadership performance on all major AI models makes users the real winners. Their real-world applications typically employ many neural networks of different kinds.

For example, an AI application may need to understand a user’s spoken request, classify an image, make a recommendation and then deliver a response as a spoken message in a human-sounding voice. Each step requires a different type of AI model.

The MLPerf benchmarks cover these and other popular AI workloads and scenarios: computer vision, natural language processing, recommendation systems, speech recognition and more. The tests ensure users will get performance that’s dependable and flexible to deploy.

Users rely on MLPerf results to make informed buying decisions, because the tests are transparent and objective. The benchmarks enjoy backing from a broad group that includes Amazon, Arm, Baidu, Google, Harvard, Intel, Meta, Microsoft, Stanford and the University of Toronto.

Orin Leads at the Edge

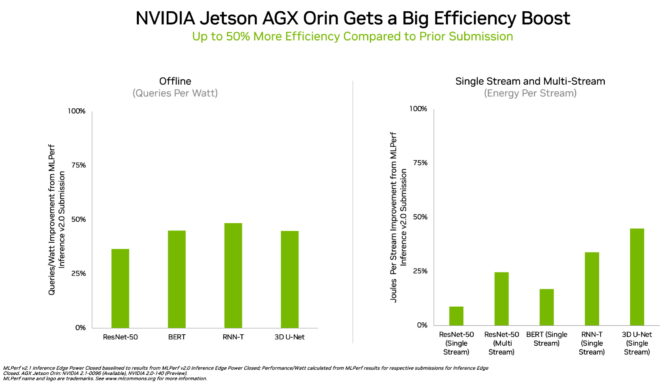

In edge computing, NVIDIA Orin ran every MLPerf benchmark, winning more tests than any other low-power system-on-a-chip. It also showed up to a 50% gain in energy efficiency compared with its debut on MLPerf in April.

In the previous round, Orin ran up to 5x faster than the prior-generation Jetson AGX Xavier module, while delivering an average of 2x better energy efficiency.

Orin integrates into a single chip an NVIDIA Ampere architecture GPU and a cluster of powerful Arm CPU cores. It’s available today in the NVIDIA Jetson AGX Orin developer kit and production modules for robotics and autonomous systems, and supports the full NVIDIA AI software stack, including platforms for autonomous vehicles (NVIDIA Hyperion), medical devices (Clara Holoscan) and robotics (Isaac).

Broad NVIDIA AI Ecosystem

The MLPerf results show NVIDIA AI is backed by the industry’s broadest ecosystem in machine learning.

More than 70 submissions in this round ran on the NVIDIA platform. For example, Microsoft Azure submitted results running NVIDIA AI on its cloud services.

In addition, 19 NVIDIA-Certified Systems appeared in this round from 10 systems makers, including ASUS, Dell Technologies, Fujitsu, GIGABYTE, Hewlett Packard Enterprise, Lenovo and Supermicro.

Their work shows users can get great performance with NVIDIA AI both in the cloud and in servers running in their own data centers.

NVIDIA partners participate in MLPerf because they know it’s a valuable tool for customers evaluating AI platforms and vendors. Results in the latest round demonstrate that the performance they deliver to users today will grow with the NVIDIA platform.

All the software used for these tests is available from the MLPerf repository, so anyone can get these world-class results. Optimizations are continuously folded into containers available on NGC, NVIDIA’s catalog for GPU-accelerated software. That’s where you’ll also find NVIDIA TensorRT, used by every submission in this round to optimize AI inference.

Read our Technical Blog for a deeper dive into the technology fueling NVIDIA’s MLPerf performance.
