

Matrix multiplication is at the heart of many machine learning breakthroughs, and it just got faster, twice. Last week, DeepMind announced it had discovered a more efficient way to perform matrix multiplication, conquering a 50-year-old record. This week, two Austrian researchers at Johannes Kepler University Linz claim they’ve bested that new record by one step.

Matrix multiplication, which involves multiplying two rectangular arrays of numbers, is often found at the heart of speech recognition, image recognition, smartphone image processing, compression, and generating computer graphics. Graphics processing units (GPUs) are particularly good at performing matrix multiplication due to their massively parallel nature. They can dice a big matrix math problem into many pieces and attack parts of it simultaneously with a special algorithm.
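To make the "dice it into pieces" idea concrete, here is a minimal sketch in plain Python. It assumes square matrices whose size is divisible by the tile size, and the names `tile_product` and `tiled_matmul` are illustrative only. It runs the tiles one after another; on a GPU, independent tiles like these are the kind of work that can be handled at the same time.

```python
def tile_product(A, B, row0, col0, tile):
    """Compute one tile-by-tile block of C = A x B, starting at (row0, col0)."""
    inner = len(B)
    return [[sum(A[i][k] * B[k][j] for k in range(inner))
             for j in range(col0, col0 + tile)]
            for i in range(row0, row0 + tile)]


def tiled_matmul(A, B, tile):
    """Multiply square matrices by assembling independently computed tiles.

    Assumes len(A) is divisible by `tile` (an assumption of this sketch).
    """
    n = len(A)
    C = [[0] * n for _ in range(n)]
    # No tile depends on any other tile's result, so these loop iterations
    # could be handed to separate workers; here they simply run in sequence.
    for row0 in range(0, n, tile):
        for col0 in range(0, n, tile):
            block = tile_product(A, B, row0, col0, tile)
            for offset, row in enumerate(block):
                C[row0 + offset][col0:col0 + tile] = row
    return C
```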

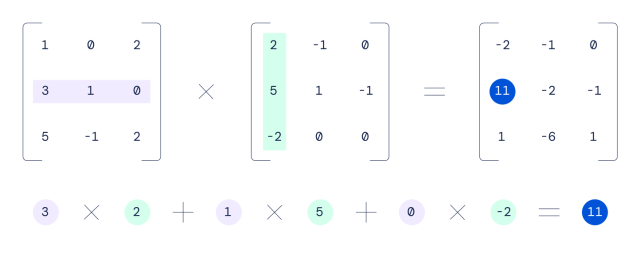

In 1969, a German mathematician named Volker Strassen discovered the previous-best algorithm for multiplying 4×4 matrices, which reduces the number of steps necessary to perform a matrix calculation. For example, multiplying two 4×4 matrices together using a traditional schoolroom method would take 64 multiplications, while Strassen’s algorithm can perform the same feat in 49 multiplications.
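The 64-versus-49 comparison can be checked with a short sketch. The seven block products below are the standard textbook form of Strassen's 2×2 scheme, applied recursively so that a 4×4 product costs 7 × 7 = 49 scalar multiplications. The helper names and the `counter` argument are illustrative choices, and the code assumes square matrices whose size is a power of two.

```python
def add(X, Y):
    return [[x + y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]


def sub(X, Y):
    return [[x - y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]


def quadrants(M):
    """Split a square matrix into its four equal blocks."""
    h = len(M) // 2
    return ([r[:h] for r in M[:h]], [r[h:] for r in M[:h]],
            [r[:h] for r in M[h:]], [r[h:] for r in M[h:]])


def schoolbook(A, B, counter):
    """Row-times-column method; counter[0] tallies scalar multiplications."""
    n = len(A)
    C = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            for k in range(n):
                counter[0] += 1
                C[i][j] += A[i][k] * B[k][j]
    return C


def strassen(A, B, counter):
    """Strassen's recursion: seven block products instead of eight."""
    if len(A) == 1:
        counter[0] += 1
        return [[A[0][0] * B[0][0]]]
    a11, a12, a21, a22 = quadrants(A)
    b11, b12, b21, b22 = quadrants(B)
    m1 = strassen(add(a11, a22), add(b11, b22), counter)
    m2 = strassen(add(a21, a22), b11, counter)
    m3 = strassen(a11, sub(b12, b22), counter)
    m4 = strassen(a22, sub(b21, b11), counter)
    m5 = strassen(add(a11, a12), b22, counter)
    m6 = strassen(sub(a21, a11), add(b11, b12), counter)
    m7 = strassen(sub(a12, a22), add(b21, b22), counter)
    c11 = add(sub(add(m1, m4), m5), m7)
    c12 = add(m3, m5)
    c21 = add(m2, m4)
    c22 = add(add(sub(m1, m2), m3), m6)
    # Stitch the four result blocks back into one matrix.
    top = [left + right for left, right in zip(c11, c12)]
    bottom = [left + right for left, right in zip(c21, c22)]
    return top + bottom


A = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12], [13, 14, 15, 16]]
B = [[2, 0, 1, 3], [1, 1, 0, 2], [0, 4, 2, 1], [3, 2, 1, 0]]
school_count, strassen_count = [0], [0]
C_school = schoolbook(A, B, school_count)
C_strassen = strassen(A, B, strassen_count)
print(school_count[0], strassen_count[0], C_school == C_strassen)
```

The saving in multiplications comes at the cost of extra additions and subtractions, which this sketch does not count.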


Using a neural network called AlphaTensor, DeepMind discovered a way to reduce that count to 47 multiplications, and its researchers published a paper about the achievement in Nature last week.

Going from 49 steps to 47 doesn’t sound like much, but when you consider how many trillions of matrix calculations take place in a GPU every day, even incremental improvements can translate into large efficiency gains, allowing AI applications to run more quickly on existing hardware.

When math is just a game, AI wins

AlphaTensor is a descendant of AlphaGo (which bested world-champion Go players in 2017) and AlphaZero, which tackled chess and shogi. DeepMind calls AlphaTensor “the first AI system for discovering novel, efficient and provably correct algorithms for fundamental tasks such as matrix multiplication.”

To discover more efficient matrix math algorithms, DeepMind set up the problem like a single-player game. The company wrote about the process in more detail in a blog post last week:

In this game, the board is a three-dimensional tensor (array of numbers), capturing how far from correct the current algorithm is. Through a set of allowed moves, corresponding to algorithm instructions, the player attempts to modify the tensor and zero out its entries. When the player manages to do so, this results in a provably correct matrix multiplication algorithm for any pair of matrices, and its efficiency is captured by the number of steps taken to zero out the tensor.
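A toy version of that board can be sketched for the 2×2 case. The indexing convention below (entries flattened row by row) and the names `matmul_tensor`, `play_move`, and `STRASSEN_MOVES` are assumptions made for illustration and may differ from DeepMind's setup. Each move subtracts the product of three vectors from the board, and the seven moves listed are Strassen's seven multiplications written in that form. The sketch only replays a known solution; it does not show how AlphaTensor searches for moves.

```python
def matmul_tensor(n):
    """Board for n x n multiplication: entry [a][b][c] is 1 when
    A-entry a times B-entry b contributes to C-entry c."""
    size = n * n
    T = [[[0] * size for _ in range(size)] for _ in range(size)]
    for i in range(n):
        for j in range(n):
            for k in range(n):
                T[i * n + k][k * n + j][i * n + j] = 1
    return T


def play_move(T, u, v, w):
    """One move: subtract the outer product of u, v and w from the board."""
    for a, ua in enumerate(u):
        for b, vb in enumerate(v):
            for c, wc in enumerate(w):
                T[a][b][c] -= ua * vb * wc


def is_zero(T):
    return all(x == 0 for plane in T for row in plane for x in row)


# Each move is (u, v, w): u picks a combination of A's entries, v a
# combination of B's entries, and w says which entries of C the product
# feeds. Entry order is [11, 12, 21, 22].
STRASSEN_MOVES = [
    ([1, 0, 0, 1], [1, 0, 0, 1], [1, 0, 0, 1]),
    ([0, 0, 1, 1], [1, 0, 0, 0], [0, 0, 1, -1]),
    ([1, 0, 0, 0], [0, 1, 0, -1], [0, 1, 0, 1]),
    ([0, 0, 0, 1], [-1, 0, 1, 0], [1, 0, 1, 0]),
    ([1, 1, 0, 0], [0, 0, 0, 1], [-1, 1, 0, 0]),
    ([-1, 0, 1, 0], [1, 1, 0, 0], [0, 0, 0, 1]),
    ([0, 1, 0, -1], [0, 0, 1, 1], [1, 0, 0, 0]),
]

board = matmul_tensor(2)
for u, v, w in STRASSEN_MOVES:
    play_move(board, u, v, w)
print(len(STRASSEN_MOVES), "moves, board cleared:", is_zero(board))
```

In this framing, the schoolroom method corresponds to clearing the same board in eight moves, one per nonzero entry, so finding a faster algorithm means finding a shorter winning sequence.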

DeepMind then trained AlphaTensor using reinforcement learning to play this fictional math game, similar to how AlphaGo learned to play Go, and it gradually improved over time. Eventually, it rediscovered Strassen’s work and that of other human mathematicians, then it surpassed them, according to DeepMind.

In a more complicated example, AlphaTensor discovered a new way to perform 5×5 matrix multiplication in 96 steps (versus 98 for the older method). This week, Manuel Kauers and Jakob Moosbauer of Johannes Kepler University in Linz, Austria, published a paper claiming they’ve reduced that count by one, down to 95 multiplications. It’s no coincidence that this apparently record-breaking new algorithm came so quickly, because it built off of DeepMind’s work. In their paper, Kauers and Moosbauer write, “This solution was obtained from the scheme of [DeepMind’s researchers] by applying a sequence of transformations leading to a scheme from which one multiplication could be eliminated.”

Tech progress builds off itself, and with AI now searching for new algorithms, it’s possible that other longstanding math records could fall soon. Similar to how computer-aided design (CAD) allowed for the development of more complex and faster computers, AI may help human engineers accelerate its own rollout.
